Feature | Doctored image detection: a brief introduction to digital image forensics

The contemporary digital revolution has changed the way we access, manipulate and share information, but these advancements have also introduced critical security issues that shake people’s confidence in the integrity of digital media. Sophisticated digital technology and photo-editing software, such as Adobe Photoshop, are ubiquitous and have made the process of manipulating images to create forgeries a fairly accessible practice. As a result, trust in digital imagery has been eroded.

This situation is compounded by the sheer number of images circulating on the World Wide Web: Flickr has some 350 million photographs, with more than 1 million added daily (2007 figures) (1), and Facebook has had more than 50 billion uploaded cumulatively (2010 figure). The authenticity of these photos becomes particularly critical when their impact spans political and social arenas. Digital forgery is indeed a nightmare for individuals (e.g. faked images of celebrities and public figures), societies (e.g. provocative fake images targeting a particular ethnicity, religion or race), journalism, and even scientific publication.

But image manipulation is not a particularly new phenomenon and can be traced as far back as the invention of photography itself. Historically, document forgery was done mechanically, but the massive increase in database storage and the introduction of e-Government mean that documents are increasingly stored in digital form. This goes hand in hand with the continuous move towards paperless workspaces and poses high security risks when documents are transmitted over networks. Documents stored in this manner are prone to digital doctoring, and this vulnerability has triggered wide interest in research on the detection of doctored images. To this end, digital image forensics (DIF), which aims to restore the lost trust in digital imagery by uncovering digital counterfeiting techniques, is at the forefront of security techniques.

Types of image forgery

Image tampering is defined as “adding or removing important features from an image without leaving any obvious traces of tampering” (2). There are various techniques for counterfeiting images and these can be classified into two broad categories.

Cloning, also known as copy-move forgery, covers techniques that manipulate a given image by copying certain regions and pasting them into other regions within the same image. These techniques are typically used to conceal the existence of certain objects, features or individuals in an image. Cloning ranges from naïve copy-pasting of content, as can be achieved with the Photoshop Clone Stamp Tool, to the application of more sophisticated algorithms such as the Content-Aware Fill feature introduced in Photoshop CS5. Forgeries produced by such algorithms are much harder to detect and often involve geometric transformations such as rescaling.

The second category of image tampering techniques is known as image splicing, a process that involves collecting regions of interest (ROIs) from different images and assembling them into a single image.

Techniques to counter forgery

Among the techniques employed to counter digital forgery is what is known as the informed approach, or the active analysis approach. Here, images are post-processed after they have been captured (e.g. via digital cameras or scanners) to create a shield that protects them from being tampered with. This protection shield can take many forms. Some known forms of the informed approach are self-embedding (3), where a compact digital copy of the image is encrypted and embedded into the image itself; digital fingerprinting, where a compact representation of an image is inserted into the host image as a watermark whose opacity can range from visible through semi-visible to totally invisible to the naked eye; and cryptography.
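As a rough illustration of the self-embedding idea, the sketch below hides a keyed hash of each block's upper seven bits in that block's least significant bits, so that a later edit can be localised to a block. It is a simplified, hypothetical scheme and not the algorithm of (3); the function names `embed` and `tampered_blocks`, the 4×4 block size and the choice of SHA-256 are all assumptions made for the example.

```python
import hashlib

BLOCK = 4  # assumed block side; 16 pixels provide 16 least significant bits


def _block_bits(image, top, left, key):
    """Derive one check bit per pixel from the block's 7 upper bits and a key."""
    upper = bytes(image[top + r][left + c] >> 1
                  for r in range(BLOCK) for c in range(BLOCK))
    position = top.to_bytes(2, "big") + left.to_bytes(2, "big")
    digest = hashlib.sha256(key + position + upper).digest()
    return [(digest[i // 8] >> (i % 8)) & 1 for i in range(BLOCK * BLOCK)]


def _block_origins(image):
    """Top-left corners of all complete blocks (leftover edge pixels are skipped)."""
    for top in range(0, len(image) - BLOCK + 1, BLOCK):
        for left in range(0, len(image[0]) - BLOCK + 1, BLOCK):
            yield top, left


def embed(image, key):
    """Return a copy of a greyscale image with check bits written into the LSBs."""
    out = [row[:] for row in image]
    for top, left in _block_origins(image):
        for i, bit in enumerate(_block_bits(image, top, left, key)):
            r, c = top + i // BLOCK, left + i % BLOCK
            out[r][c] = (image[r][c] & 0xFE) | bit
    return out


def tampered_blocks(image, key):
    """List the blocks whose stored LSBs no longer match the recomputed bits."""
    bad = []
    for top, left in _block_origins(image):
        stored = [image[top + i // BLOCK][left + i % BLOCK] & 1
                  for i in range(BLOCK * BLOCK)]
        if stored != _block_bits(image, top, left, key):
            bad.append((top, left))
    return bad


image = [[(r * 16 + c * 3) % 256 for c in range(8)] for r in range(8)]
protected = embed(image, b"secret")
protected[5][6] ^= 1  # simulate an edit that disturbs one stored bit
print(tampered_blocks(protected, b"secret"))  # [(4, 4)]
```

Embedding changes each pixel by at most one grey level, which is why this kind of shield can stay invisible. It also shows the limitation discussed next: the check only works for images that were protected before anyone tampered with them.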

The main shortcoming of active analysis is that it depends on pre-emptive protection mechanisms. Protection against tampering must therefore precede any attempt at forgery, which means that the millions of digital images that are already on the web cannot benefit from this approach.

Passive analysis overcomes this shortcoming and caters for the huge number of images already in circulation. As opposed to the active analysis approach, passive analysis relies on blind classification of images based on internal image statistics, without necessarily having access to a priori embedded information. Several criteria have been proposed to realise this type of analysis, some of which are listed below:

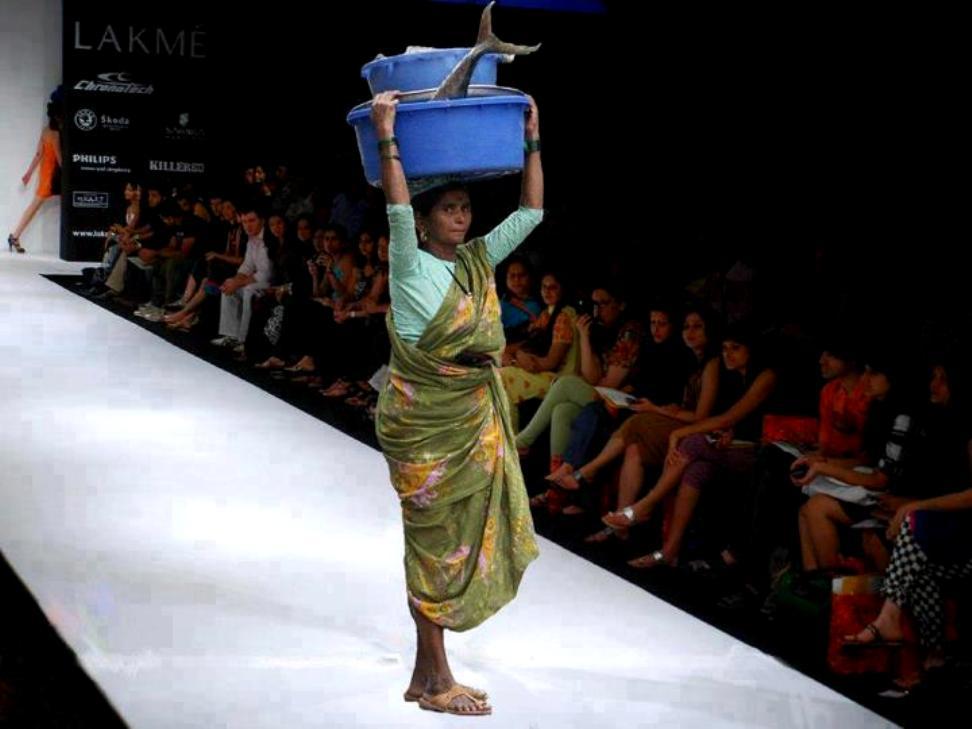

Physical and semantic inspection: One of the most straightforward techniques is to rely on human perception when inspecting images to identify incoherent or unusual features (see for instance Figure 1). However, image tampering can be sophisticated enough to elude the human visual system.

Light direction and inconsistency analysis: Light can be a valuable clue when identifying fake images. Naturally, light shining from a specific source should exhibit consistent absorption and reflection on surfaces of the same nature. Hence, inserting an object into an image from a different scene would likely distort this dependency. Light-based analysis can be carried out at the level of 2D images or on their 3D re-projection. An example of this is shown in Figure 2.

Detection of duplicate regions: Digital forgery can be exposed by detecting duplicated image regions, that is, portions of an image that have been copied and pasted from one region to another. The detection can be achieved in many ways, one of which is to compare the image block by block until highly correlated blocks are identified; these are then examined further to establish whether they stem from tampering. This category of techniques involves a high degree of statistical analysis.
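The block-by-block comparison can be sketched as follows. This is a minimal illustration that only finds exact duplicates by sorting all overlapping blocks so that identical ones end up next to each other; it assumes a greyscale image stored as a list of rows, and the function name `find_duplicate_blocks` and its parameters are hypothetical. Practical detectors compare compact, noise-tolerant features of each block (DCT coefficients, for example) rather than raw pixels.

```python
import random
from collections import defaultdict


def find_duplicate_blocks(image, block=4, min_shift=4):
    """Count identical block pairs per displacement (dy, dx) between them."""
    height, width = len(image), len(image[0])
    entries = []
    for top in range(height - block + 1):
        for left in range(width - block + 1):
            pixels = tuple(image[top + r][left + c]
                           for r in range(block) for c in range(block))
            entries.append((pixels, top, left))
    # Lexicographic sorting places identical blocks in adjacent positions.
    entries.sort()
    shifts = defaultdict(int)
    for (p1, t1, l1), (p2, t2, l2) in zip(entries, entries[1:]):
        if p1 != p2:
            continue
        dy, dx = t2 - t1, l2 - l1
        # Ignore near neighbours, which also match in flat image areas.
        if max(abs(dy), abs(dx)) >= min_shift:
            shifts[(dy, dx)] += 1
    return dict(shifts)


# Synthetic test image: random texture with one 6x6 region cloned.
rng = random.Random(0)
image = [[rng.randrange(256) for _ in range(16)] for _ in range(16)]
for r in range(6):
    for c in range(6):
        image[9 + r][8 + c] = image[1 + r][1 + c]
print(find_duplicate_blocks(image))
```

Many block pairs sharing the same displacement are the tell-tale sign: here the cloned 6×6 patch contains nine 4×4 blocks, so the displacement (8, 7) should be reported nine times. A single matching pair, by contrast, is more likely a coincidence.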

Figure 2. Fake image used by the politician Jeffrey Wong Su En. The doctored photo (left) shows the politician being knighted by Queen Elizabeth II. The original genuine image (right) depicts Ross Brawn receiving an OBE from the Queen. Notice the inconsistency in light direction and intensity between the face of Mr. Wong and that of the Queen.

Analysis of sensor inherited noise: The model of a digital camera can be detected from what is called a noise fingerprint in the images that it produces. This technique is often used in image forensics, where the hypothesis goes something like this: when image splicing uses patches obtained from different images, it is highly likely that those patches were captured by different cameras, so the noise they carry into the host (faked) image will not match its existing noise pattern. This irregularity in noise distribution can be picked up by statistical measurements, such that the image can be separated into two distinct regions based on the inherited noise. A proof of concept of this technique was carried out at Binghamton University (4). However, findings from this particular project are hard to generalise because the work was limited to well-known camera brands. Additionally, unless the same optical lens is used, cameras from the same brand could still produce different patterns of sensor noise.
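The splicing hypothesis can be illustrated with a much cruder stand-in for a real sensor fingerprint: estimate the noise in each block as the spread of the residual left after local smoothing, then flag blocks whose noise level differs markedly from the rest. This is only a sketch under stated assumptions and not the method of (4); the 3×3 mean filter, the 8×8 block size, the factor of 2 and all function names are choices made for the example.

```python
import random
import statistics


def residual(image):
    """Pixel minus its 3x3 neighbourhood mean (border pixels are left at 0)."""
    height, width = len(image), len(image[0])
    out = [[0.0] * width for _ in range(height)]
    for r in range(1, height - 1):
        for c in range(1, width - 1):
            mean = sum(image[r + dr][c + dc]
                       for dr in (-1, 0, 1) for dc in (-1, 0, 1)) / 9
            out[r][c] = image[r][c] - mean
    return out


def block_noise_levels(image, block=8):
    """Standard deviation of the noise residual in each non-overlapping block."""
    res = residual(image)
    levels = {}
    for top in range(0, len(image) - block + 1, block):
        for left in range(0, len(image[0]) - block + 1, block):
            values = [res[top + r][left + c]
                      for r in range(block) for c in range(block)]
            levels[(top, left)] = statistics.pstdev(values)
    return levels


def suspicious_blocks(levels, factor=2.0):
    """Blocks whose noise level is far above or below the median level."""
    median = statistics.median(levels.values())
    return [pos for pos, level in levels.items()
            if level > factor * median or level < median / factor]


# Synthetic host image with mild noise and one "spliced" block that is noisier.
rng = random.Random(1)
image = [[128 + rng.gauss(0, 2) for _ in range(32)] for _ in range(32)]
for r in range(8, 16):
    for c in range(16, 24):
        image[r][c] = 128 + rng.gauss(0, 10)
print(suspicious_blocks(block_noise_levels(image)))
```

On this synthetic image the block at (8, 16) should be the only one flagged. On real photographs, texture and edges also inflate the residual, which is one reason actual methods rely on more careful noise models.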

Retrieval of Camera Response Function (CRF): The CRF, also known as the camera response curve, describes how a camera maps the light reaching its sensor to image intensity values. Its retrieval is a mathematical process that reconstructs this property of the camera from a single image or from a set of images of the same subject matter taken at different exposures. The result is usually depicted as a plot of the image’s irradiance against its intensity.

Double compression: Compression is a data encoding technique used to compact the data contained in an image in order to improve the efficiency of storage or transmission. Compression can be lossy or lossless. Lossy means that some parts of the image are discarded during compression, and so a reconstruction of the image from the compressed data will not yield the same image values. Lossless compression preserves all the data from an image, and so reconstruction will reveal the same original uncompressed image values.
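The difference between the two kinds of compression can be shown in a few lines. The sketch below uses `zlib` as an example of a lossless codec and plain rounding to multiples of a step size as a stand-in for the lossy part of an image codec; the pixel values and the step size of 10 are made up for the illustration.

```python
import zlib

pixels = bytes([12, 13, 17, 200, 201, 199, 64, 66])

# Lossless: decompression returns exactly the original bytes.
restored = zlib.decompress(zlib.compress(pixels))
print(restored == pixels)  # True


def lossy_round_trip(values, step):
    """Store only the nearest multiple of step, then reconstruct from it."""
    levels = [round(v / step) for v in values]  # what would be stored
    return [level * step for level in levels]   # what can be rebuilt


# Lossy: nearby values collapse together and the originals cannot be recovered.
print(lossy_round_trip(pixels, 10))  # [10, 10, 20, 200, 200, 200, 60, 70]
```

The larger the step, the fewer distinct levels need to be stored and the more detail is discarded.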

Among other things, a data table (in JPEG, the quantisation table) is used to help compress and store an image. When the image is opened it is decompressed, and it is compressed again only if it is modified and saved. This second compression leaves traces that can be detected. However, whether a detection means tampering or not is sometimes ambiguous: double compression raises false alarms when an image has undergone a legitimate manipulation that does not involve any kind of tampering. Nevertheless, when combined with one or more of the previous methods, double compression can be a very powerful detection tool.
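One way such traces arise can be sketched with the rounding step of a lossy codec. Assuming the data table holds the step sizes used to round coefficient values, rounding with one step and then again with a different step leaves regularly spaced empty bins in the histogram of the stored values, whereas a single rounding does not. The integer ramp used as input, the step sizes 5 and 3 and the function names are all assumptions for the illustration; a real detector would examine the DCT coefficients of an actual JPEG file.

```python
def quantise(values, step):
    """Snap each value to the nearest multiple of step (compress, then decode)."""
    return [round(v / step) * step for v in values]


def histogram_gaps(values, step):
    """Bins, in units of step, between the extremes that contain no value."""
    bins = {round(v / step) for v in values}
    return [b for b in range(min(bins), max(bins) + 1) if b not in bins]


coefficients = list(range(60))

# Saved once with step 3: every bin is populated.
once = quantise(coefficients, 3)
print(histogram_gaps(once, 3))   # []

# Saved with step 5, then re-saved with step 3: periodic empty bins appear.
twice = quantise(quantise(coefficients, 5), 3)
print(histogram_gaps(twice, 3))  # [1, 4, 6, 9, 11, 14, 16, 19]
```

The pattern of gaps only shows that the data were compressed twice with different settings, not why, which is the ambiguity noted above.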

Project: Towards more robust and generalisable digital image forensics techniques

The aforementioned techniques are all part of the newly emerged field of digital image forensics. However, most of the efforts in the field are hard to generalise and still lack robustness against skilful counterfeiters who tend to adjust their strategies to subvert the latest advances in forensic techniques.

We are in the process of launching a new project that will contribute to the advancement of digital forensics. The aim is to examine existing methods for image forgery detection, evaluate them and eventually develop a new approach that we hope will be robust against several types of image forgery at the same time. We are working to create a benchmark database comprising a variety of cases (forged images) and controls (natural intact images) and to develop a software package encapsulating new routines/functions for detecting local copy-pasted forgery and other generic image-based tampering. The project will also include the organisation of a number of workshops and seminars on the subject.

The project involves several research institutes and is a collaborative effort between Algerian and Tunisian researchers (Prof. Kamel Hamrouni and Assist. Prof. Sami Bourouis from Université de Tunis El-Manar, and Ecole Nationale d’Ingénieurs de Tunis, SITI laboratoire, Tunisia, and Dr. Abbas Cheddad from Karolinska Institutet in Stockholm, Sweden). We are hoping to attain a high-profile study and to build a community of interest around our work to contribute towards pushing forward the research in this new field.

References

(1) Personal communication with Prof. Andrew Zisserman (Oxford University) at the Vision Video and Graphics (VVG) EPSRC Summer School 2007, University of Bath, UK.

(2) J. Fridrich, D. Soukal, and J. Lukas. Detection of copy-move forgery in digital images. In Proceedings of DFRWS, 2003.

(3) A. Cheddad, J. Condell, K. Curran and P. Mc Kevitt. “A Secure and Improved Self Embedding Algorithm to Combat Digital Document Forgery”. Signal Processing 89 (12)(2009) 2324-2332, Elsevier Science. Special Issue: Visual Information Analysis for Security.

(4) J. Lukás, J. Fridrich, and M. Goljan. “Digital Camera Identification From Sensor Pattern Noise”. IEEE Transactions on Information Forensics and Security, 1(2)(2006) 205-214.

7 Comments so far

ruhi, posted on 9:00 am - Aug 10, 2013

Sir, I'm doing a project on a block-dividing method based on improved DCT for image copy-move forgery detection, so please provide me with related papers and a database of images if possible.

Abbas, posted on 4:10 pm - Aug 10, 2013

Dear Ruhi,

There are plenty of papers that discuss DCT features for copy-move detection. Here are a few papers that I find worth reading:

##################

Yanping Huang, Wei Lu, Wei Sun, Dongyang Long, Improved DCT-based detection of copy-move forgery in images, Forensic Science International, Volume 206, Issues 1–3, 20 March 2011, Pages 178-184.

Babak Mahdian, Stanislav Saic, Using noise inconsistencies for blind image forensics, Image and Vision Computing, Volume 27, Issue 10, 2 September 2009, Pages 1497-1503.

Zhouchen Lin, Junfeng He, Xiaoou Tang, Chi-Keung Tang, Fast, automatic and fine-grained tampered JPEG image detection via DCT coefficient analysis, Pattern Recognition, Volume 42, Issue 11, November 2009, Pages 2492-2501.

X. Pan and S. Lyu, “Region Duplication Detection Using Image Feature Matching”, IEEE Transactions on Information Forensics and Security (TIFS), 5(4):857-867, 2010.

##################

As for the image database, as far as I am aware there is no publicly available data set. There are images scattered here and there on the web which are commonly used in the literature and you need to track them down individually. If my grant proposal gets approved, I will start forming a comprehensive data set with the ground truth. Until then, you could create your own testbed comprising images that you alter yourself.

Cheers,

Abbas

diaa uliyan, posted on 6:19 am - Mar 2, 2015

Dear Mr. Abbas Cheddad,

I am working on copy-move forgery in digital image forensics. Can you point me to some related work on forged medical images, and to any database of forged medical images?

Thanks,

Abbas, posted on 9:29 am - Mar 2, 2015

Dear Mr. Diaa,

I am afraid there are not many instances of digital forgery happening in the medical field, at least to my knowledge. There were some occasional scandals in the field of medical science that involved doctored microscopic images; however, I cannot guarantee they are of the copy-move type. Check the link below for some examples:

http://www.fourandsix.com/photo-tampering-history/tag/science

Regards,

Abbas

Diaa, posted on 4:59 pm - Mar 21, 2015

Thanks Mr.Abbas for your reply. I have checked the related papers of copy move forgery. They mentioned that forgery could happen in medical images but did not give examples.

Best wishes.

Amit, posted on 10:58 am - Apr 3, 2018

Dear Abbas Sir

Kindly provide some future directions in this field of Image Forensics for Research Purpose especially with Machine Learning

Abbas, posted on 2:24 pm - Apr 26, 2018

Dear Amit,

Sorry, I just noticed your message as this page does not notify me of newly posted messages.

Well, the application of machine learning (including the most recent deep learning methods, such as convolutional neural networks) in the field of image forensics is no longer a future vision. There are several research studies that tackle this problem.
