Feature | Signal and Image Multiresolution Analysis

Multiresolution analysis has received considerable attention in recent years from researchers in various fields. It is considered to be a powerful tool for efficiently representing signals and images at multiple levels of detail with many inherent advantages including compression, level-of-detail display and editing, as well as for practical applications such as pattern recognition, data mining and transmission.

At the core of multiresolution analysis is the numerical implementation of the wavelet transform. Grasping the concept of wavelets is therefore key to understanding this type of analysis. To this end, we recently published a book entitled “Signal and Image Multiresolution Analysis”, which aims to provide a general introduction as well as new insights into the concepts behind multiresolution analysis.

Brief History

The 1970s saw attempts to find an alternative to Fourier analysis, which decomposes a mathematical function into simpler oscillating components and was originally developed to study heat propagation. Towards the end of the 1970s and the beginning of the 1980s, Jean Morlet discovered highly temporally localized waves, which he named wavelets. Wavelets serve as a mathematical microscope because they can automatically adapt to the various components of a given signal: they are compressed for the analysis of high-frequency transients, which increases the microscope’s magnification and its ability to examine finer details.

In 1984, at the advent of the wavelet era, the physicist Alex Grossmann collaborated with Jean Morlet to demonstrate the robustness of the wavelet transform and its property of energy conservation. Shortly afterwards, in 1986, the mathematician Yves Meyer added the finishing mathematical touches to this formidable saga.

Some years later, Stéphane Mallat provided the information processing community with a “filter” approach to the wavelet transform in which a scaling function plays the principal role. The concept of multiresolution analysis materialized from the decomposition of a signal by a cascade of filters, which take the form of a pair of mirror filters for each level of resolution: a low-pass filter associated with the scaling function to provide approximations, and a high-pass filter associated with the wavelet to encode details. Thanks to her tenacity and dedication, physicist-mathematician Ingrid Daubechies made a significant contribution to the saga in 1988 by proposing iteratively constructed wavelets that are easy to use in practice and that offer orthogonality, compact support, regularity, and vanishing moments.
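The filter cascade described above can be sketched in a few lines. The example below is only an illustration: it assumes the Haar wavelet (the simplest mirror-filter pair, chosen here for brevity and not named in this article), and the function names are hypothetical. It shows the approximations being re-filtered at each level, the exact reconstruction from the coefficients, and the energy conservation mentioned earlier.

```python
import math

# Haar mirror-filter pair: an assumption made for this sketch.
S = 1.0 / math.sqrt(2.0)

def analysis_step(signal):
    """One resolution level: low-pass gives approximations, high-pass gives
    details; both outputs are downsampled by two."""
    approx = [S * (signal[i] + signal[i + 1]) for i in range(0, len(signal), 2)]
    detail = [S * (signal[i] - signal[i + 1]) for i in range(0, len(signal), 2)]
    return approx, detail

def synthesis_step(approx, detail):
    """Invert one level by recombining approximations and details."""
    out = []
    for a, d in zip(approx, detail):
        out.extend([S * (a + d), S * (a - d)])
    return out

def decompose(signal, levels):
    """Cascade: the approximations of one level feed the next level."""
    approx, details = list(signal), []
    for _ in range(levels):
        approx, d = analysis_step(approx)
        details.append(d)
    return approx, details

def reconstruct(approx, details):
    """Run the cascade backwards, from the coarsest level to the finest."""
    for d in reversed(details):
        approx = synthesis_step(approx, d)
    return approx

def energy(values):
    return sum(v * v for v in values)

signal = [4.0, 6.0, 10.0, 12.0, 8.0, 6.0, 5.0, 5.0]
approx, details = decompose(signal, 3)
rebuilt = reconstruct(approx, details)
coeff_energy = energy(approx) + sum(energy(d) for d in details)
```

Because this particular filter pair is orthonormal, `coeff_energy` matches `energy(signal)` up to rounding, and `rebuilt` matches `signal`.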

A fast algorithm applicable to signals and images was thus born, bringing the world of wavelets great acclaim at the end of the 1980s.

Flagship applications of wavelets

Today, applications of wavelets are concentrated on three general problems: analysis to extract relevant information from signals, noise reduction, and (image) compression.

Analysis to extract relevant information

As mentioned above, wavelets are used to analyse signals at different scale levels, effectively playing the role of a microscope or a numerical zoom. To achieve this, the signal is typically checked at different resolutions and the resulting coefficients of the wavelet transform encode the information located in the scope of the wavelet. If these coefficients are not sufficient to “read” relevant information, they are then transformed further. Some coefficients can be moved, rearranged or even cancelled, such that the inverse transformation (or synthesis) retains only a single area of interest (as, for example, is the case with images which we will discuss further below). In this manner, wavelet analysis allows the extraction of relevant information at any given level.
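As a small illustration of this “numerical zoom”, the sketch below computes the finest-scale detail coefficients of a flat signal containing a single spike. It assumes the Haar wavelet and a hypothetical helper name, neither of which comes from the article. The only non-zero coefficient is the one whose support contains the spike, which is how the coefficients encode where the information sits.

```python
import math

S = 1.0 / math.sqrt(2.0)  # Haar normalisation (assumed wavelet)

def haar_details(signal):
    """Finest-scale detail coefficients: one per pair of samples."""
    return [S * (signal[i] - signal[i + 1]) for i in range(0, len(signal), 2)]

# Flat signal with one transient at sample 9.
signal = [1.0] * 16
signal[9] = 5.0

details = haar_details(signal)

# The largest detail coefficient points at the pair of samples holding the spike.
k = max(range(len(details)), key=lambda i: abs(details[i]))
located = (2 * k, 2 * k + 1)
```

Here `located` is the pair of sample positions covered by the dominant coefficient; every other detail coefficient is zero because the signal is constant there.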

Noise reduction

Signals often contain noise. For example, an image captured by a digital device might be blurry in certain areas. The main challenge here is how to restore a useful signal when only a noisy version of it is available. The wavelet transform of the observed signal helps overcome this challenge through suitable modification of the coefficients of the captured signal. A denoised version of the signal is then obtained by simply inverting the transform.
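One common way to modify the coefficients is thresholding: small detail coefficients are treated as noise and set to zero before the transform is inverted. The sketch below is a minimal illustration under several assumptions that are not taken from the article: a Haar wavelet, hard thresholding, a known noise level `sigma`, and the so-called universal threshold `sigma * sqrt(2 ln N)`. All function names are hypothetical.

```python
import math
import random

S = 1.0 / math.sqrt(2.0)  # Haar normalisation (assumed wavelet)

def haar_forward(signal):
    """Full orthonormal Haar decomposition; length must be a power of two."""
    approx, details = list(signal), []
    while len(approx) > 1:
        pairs = [(approx[i], approx[i + 1]) for i in range(0, len(approx), 2)]
        details.append([S * (a - b) for a, b in pairs])
        approx = [S * (a + b) for a, b in pairs]
    return approx, details

def haar_inverse(approx, details):
    """Rebuild the signal from the coarsest level to the finest."""
    approx = list(approx)
    for d in reversed(details):
        nxt = []
        for a, c in zip(approx, d):
            nxt.extend([S * (a + c), S * (a - c)])
        approx = nxt
    return approx

def denoise(noisy, sigma):
    """Zero every detail coefficient below the threshold, then invert."""
    threshold = sigma * math.sqrt(2.0 * math.log(len(noisy)))
    approx, details = haar_forward(noisy)
    kept = [[c if abs(c) > threshold else 0.0 for c in d] for d in details]
    return haar_inverse(approx, kept)

def squared_error(x, y):
    return sum((a - b) ** 2 for a, b in zip(x, y))

rng = random.Random(0)  # fixed seed so the run is repeatable
sigma = 0.3
clean = [0.0] * 32 + [4.0] * 32  # a step, standing in for the useful signal
noisy = [v + rng.gauss(0.0, sigma) for v in clean]
denoised = denoise(noisy, sigma)

err_noisy = squared_error(noisy, clean)
err_denoised = squared_error(denoised, clean)
```

For this step signal the clean detail coefficients are zero everywhere except at the coarsest level, so zeroing the small coefficients removes noise without removing signal, and `err_denoised` comes out below `err_noisy`.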

(Image) Compression

With the vast number of images we are inundated with every day, image archiving becomes a challenge. Managing high volumes of image data through limited transmission channels is amongst the main obstacles in image archival or transmission. Once again, wavelets come to our aid because they help transform this flood of information into a very sparse representation that considerably reduces the amount of data that needs to be encoded. In this regard, the reader might be familiar with the JPEG2000 standard, which constitutes the most concrete and obvious advance since it can compress a given image to five thousandths of its original size.
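The idea of a sparse representation can be illustrated on a toy signal. The sketch below is not the JPEG2000 codec: it assumes a Haar wavelet, uses hypothetical function names, and simply keeps the largest coefficients while discarding the rest. For a piecewise-constant signal of 16 samples, only two coefficients are non-zero, and they suffice to rebuild the signal.

```python
import math

S = 1.0 / math.sqrt(2.0)  # Haar normalisation (assumed wavelet)

def haar_forward(signal):
    """Full Haar transform stored flat:
    [approximation, coarsest detail, ..., finest details]."""
    coeffs = list(signal)
    n = len(coeffs)
    while n > 1:
        half = n // 2
        head = coeffs[:n]
        for i in range(half):
            a, b = head[2 * i], head[2 * i + 1]
            coeffs[i] = S * (a + b)         # approximation
            coeffs[half + i] = S * (a - b)  # detail
        n = half
    return coeffs

def haar_inverse(coeffs):
    """Invert the flat layout produced by haar_forward."""
    out = list(coeffs)
    n = 1
    while n < len(out):
        head = out[:2 * n]
        for i in range(n):
            a, d = head[i], head[n + i]
            out[2 * i] = S * (a + d)
            out[2 * i + 1] = S * (a - d)
        n *= 2
    return out

def keep_largest(coeffs, keep):
    """Zero all but the `keep` largest-magnitude coefficients."""
    order = sorted(range(len(coeffs)), key=lambda i: abs(coeffs[i]), reverse=True)
    kept = set(order[:keep])
    return [c if i in kept else 0.0 for i, c in enumerate(coeffs)]

signal = [2.0] * 8 + [6.0] * 8
coeffs = haar_forward(signal)
nonzero = sum(1 for c in coeffs if abs(c) > 1e-12)

sparse = keep_largest(coeffs, 2)
rebuilt = haar_inverse(sparse)
max_error = max(abs(a - b) for a, b in zip(rebuilt, signal))
```

Real images are not exactly piecewise constant, so a practical codec keeps more coefficients and quantises them, but the principle of encoding only the few significant coefficients is the same.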

Recent application areas of multiresolution analysis

Applications of multiresolution analysis span a vast array of domains and areas, too numerous to cover in this article – the interested reader should refer to the book for a more thorough review. Here, we describe the most remarkable of its applications which stem from the fields of biomedical engineering and telecommunications.

Biomedical engineering

Medical imaging and diagnostic techniques have seen spectacular developments as well as heavy investment in recent years. Most research hospitals have now established neuroimaging centres equipped with functional PET (positron emission tomography) and MRI (magnetic resonance imaging) equipment.

These new technologies supplement more classic techniques that are also perpetually evolving, such as ultrasound, X-ray, tomography, electroencephalography (EEG) and electrocardiography (ECG). The signals analysed with these technologies are highly complex (involving, for instance, anomalies or outliers, signal mixing, combinations of associated modalities, inverse problems, and so on). The demands in terms of extracting relevant information are thus increasingly great, yet the image and signal processing techniques commonly used in these domains remain rudimentary. With its power and efficiency in signal analysis, wavelet-based multiresolution analysis (e.g. decomposition-reconstruction, feature extraction, segmentation, contour detection, compression, noise reduction, progressive transmission, and so on) can be combined with existing classification techniques to address present and future scientific and technological issues.

Telecommunications

Adaptive compression for sensor networks

Sensor networks are becoming increasingly indispensable, and optimisation currently remains the only way to address the three major constraints they face: energy, transmission power, and storage and computing capacity. However, increasing network capacity on the one hand and reducing energy consumption on the other are contradictory needs.

Miniaturisation of equipment and the proliferation of connection types, together with improvements in processing and memory capacity, have inevitably led to sensor networks on a very broad scale, capable of accomplishing the most complex of human tasks. Growing demand for mobile multimedia devices has also led to a dramatic increase in the flow of data exchanged between wireless communication systems. The use of “smartphones” contributes to the transformation of communication methods into high-speed multimedia communication. Added to this are users’ expectations, which are also changing: users now expect continuous connectivity both at home and on the move. Hence the question: how, from both the economic and the ecological perspective, can mobile operators meet the constantly increasing demands of data transportation? The book describes a potential answer, albeit a partial one, to these demands for economical data transportation, notably in terms of speed. The proposed solution consists of a method for interpolating error-free decoded spatial information into corrupted areas, using a coupled multiresolution-geometry driven diffusion process.

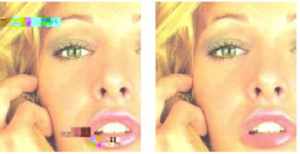

Masking image encoding and transmission errors

A possible problem for any communication system, including the IP protocol, is loss or possible degradation of the information transmitted through it. If no precautions are taken to ensure a certain quality of service, the result can have disastrous consequences for the quality of the transmitted signal.

In the case of images, such error sensitivity is often due to the use of variable-length codes to encode the image. Although these codes can achieve very high compression rates, as is the case with JPEG 2000, they also introduce dependencies that spread errors across the transmitted code, making it very hard to reconstruct the data correctly.

Various algorithms have been designed to reconstruct images so that they remain visually faithful to the original copy. However, these algorithms are based on masking, or concealment, rather than error correction: they reconstitute one or more missing areas in an image using valid data available from neighbouring areas (see Figure 1). The processes involved in error masking can also be extended to other multimedia data encoding standards (audio, video, streaming, mobile, digital radio, video games, digital television and high-definition media, etc.), such as MPEG-4 (Moving Picture Experts Group). This method uses wavelet-based encoding, but is applicable to all multiresolution codecs. For optimal error masking, its focus is on reconstructing missing low-frequency coefficients, because the loss of high-frequency coefficients has much less impact on visual quality and can be easily recovered with other methods.
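The principle of concealment from neighbouring data can be sketched on a single row of low-frequency (approximation) coefficients. The example below is deliberately simplistic and is not the diffusion-based method described in the book: it swaps in plain neighbour averaging as a stand-in, and the function name and data are hypothetical.

```python
def conceal(approx, lost):
    """Fill each lost coefficient with the mean of its valid direct neighbours.

    `approx` is a row of approximation coefficients and `lost` is the set of
    indices flagged as corrupted. Simple averaging is an assumption made for
    this sketch; a coefficient with no valid neighbour is left unchanged.
    """
    filled = list(approx)
    for i in sorted(lost):
        neighbours = [approx[j] for j in (i - 1, i + 1)
                      if 0 <= j < len(approx) and j not in lost]
        if neighbours:
            filled[i] = sum(neighbours) / len(neighbours)
    return filled

# Row of approximation coefficients in which index 2 arrived corrupted (as 0.0).
received = [10.0, 12.0, 0.0, 16.0, 18.0]
concealed = conceal(received, {2})
```

The concealed row is then passed to the inverse wavelet transform in place of the corrupted one. The result is visually plausible rather than exact, which is the difference between masking and error correction.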

Conclusion

The book “Signal and Image Multiresolution Analysis” aims to provide a general introduction as well as new insights into multiresolution analysis. It achieves this by making obscure techniques more accessible and by merging, unifying or completing them with additional concepts such as encoding, feature extraction, compressive sensing, multifractal analysis and texture analysis.

The book is aimed at industrial engineers, medical researchers, university lab attendants, lecturer-researchers and researchers from various specialisations. It is also intended to contribute to the studies of graduate students in engineering, particularly in the fields of medical imaging, intelligent instrumentation, telecommunications, and signal and image processing. Given the diversity of the problems posed and addressed, the book paves the way for the development of new research themes, such as brain–computer interface (BCI), compressive sensing, functional magnetic resonance imaging (fMRI), tissue characterization (bones, skin, etc.) and the analysis of complex phenomena in general.

About the Author